Disability Claims Insider Web Platform

Comprehensive quality assurance services that helped a U.S.-based insurance company lower operational and integration risks and cut regression testing time from 3 days to 4 hours

About the project

- Client: EdTech and Consulting company

- Location: USA | Austin, Texas

- Company Size: 201+ Employees

- Industry: E-Learning

- Solution: Legal

As part of its digital transformation strategy, the client initiated the development of a web-based platform designed to automate and centralize key business processes related to disability benefit support. The solution serves as a unified hub where benefit recipients and their assigned coaches can interact directly, manage documentation, track progress, and follow structured action plans within a single digital environment.

What’s remarkable is that they’re always on top of deliverables, timelines, and bug-fixes. Their transparency and responsive communication stand out, as does their fast remediation of bugs and committed resources.

Customer

Our customer is an education‑based coaching company that helps individuals understand and navigate the disability claims process. The company plays a pivotal role in connecting potential benefit recipients with medical professionals who conduct disability evaluations and provide credible Independent Medical Opinions & Nexus Statements for a wide spectrum of disability conditions. They offer a comprehensive range of expert-level educational resources through their websites and programs to ensure that benefit applicants are well-informed and supported throughout their journey.

Business Challenge

The project presented significant quality and operational challenges, as the platform was already live and actively used, meaning any defect or regression could directly impact users relying on it for disability benefit support. The client needed to ensure robust regression and end-to-end testing across complex functional and UI flows, but this effort was constrained by tight release timelines and heavy reliance on third-party integrations, which initially limited overall test coverage to roughly 50 percent. With frequent deployments and continuous feature updates, manual testing alone proved insufficient and unsustainable, creating a clear need for a more scalable, automation-driven QA strategy capable of maintaining stability without slowing delivery.

Why Leobit

The customer selected Leobit as a delivery partner for our experience developing complex, production-critical systems and our ability to build structured QA processes tailored to evolving environments. The QA team was expected not only to identify defects but also to stabilize the platform, formalize testing practices, and support predictable releases in a live production setting.

Project in detail

When Leobit joined the project, the platform was already live but unstable. Users experienced defects, inconsistent behavior, and performance issues that affected daily operations. Before introducing any new functionality, the system required immediate stabilization.

During the technical audit and refactoring phase led by the development team, QA engineers played a parallel and equally critical role. As approximately 80% of redundant or unused code was removed and components were restructured, the QA team focused on validating the integrity of core workflows. Every refactored module required verification to ensure that business logic remained intact and that no hidden dependencies were broken during cleanup.

Frequent changes in product ownership required QA to act as a knowledge anchor. By consolidating requirements, documenting business logic, and aligning expectations between teams, QA contributed to continuity and reduced the impact of shifting priorities.

We followed a Scrum-based framework with sprint planning, reviews, and demos. QA engineers participated from the earliest stages of each sprint, reviewing requirements, identifying risks, and defining acceptance criteria together with stakeholders. Regular demos supported early feedback and validation of real business scenarios. Acceptance testing was not treated as a final checkpoint but as an ongoing collaborative process that ensured alignment between development output and stakeholder expectations.

With scheduled production releases at the end of each sprint, QA became directly involved in release readiness decisions. Each deployment was preceded by smoke testing and targeted regression of business-critical flows. Automated tests executed within the CI/CD pipeline provided additional assurance before go-live.

Since the platform was actively used, QA monitored system behavior after deployment, reviewed logs, and verified critical user journeys in production. This allowed the team to detect anomalies early, investigate issues quickly, and minimize user impact.

Quality assurance extended beyond pre-release testing into continuous production monitoring. QA specialists reviewed application logs, background job execution, and integration logs from external systems to validate end-to-end data consistency. This proactive, production-aware approach enabled early detection of synchronization issues, performance anomalies, and unexpected behavior. It significantly reduced incident response time and helped maintain user trust in the platform.

QA strategy during the stabilization phase

The stabilization phase required a highly focused and risk-driven approach. The QA team concentrated on identifying critical defects, verifying high-impact user journeys, and ensuring consistent behavior across roles. Instead of attempting broad coverage, the team prioritized business-critical scenarios that directly affected real users and financial operations.
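One way to picture this kind of risk-driven prioritization is a simple score per scenario. The sketch below is illustrative only: the scenario names, the 1 to 5 impact and likelihood scales, and the `prioritize` helper are assumptions made for the example, not details taken from the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    impact: int      # hypothetical scale: 1 (cosmetic) .. 5 (blocks users or payments)
    likelihood: int  # hypothetical scale: 1 (rarely breaks) .. 5 (breaks often)

    @property
    def risk(self) -> int:
        # Classic risk matrix: impact multiplied by likelihood.
        return self.impact * self.likelihood


def prioritize(scenarios: list[Scenario], budget: int) -> list[Scenario]:
    """Return the `budget` highest-risk scenarios, highest risk first."""
    ranked = sorted(scenarios, key=lambda s: s.risk, reverse=True)
    return ranked[:budget]


# Hypothetical backlog used only to demonstrate the ranking.
backlog = [
    Scenario("payment tracking", impact=5, likelihood=4),
    Scenario("profile avatar upload", impact=1, likelihood=2),
    Scenario("coach-to-recipient messaging", impact=4, likelihood=3),
    Scenario("document upload", impact=4, likelihood=4),
]

for scenario in prioritize(backlog, budget=3):
    print(f"{scenario.risk:>2}  {scenario.name}")
```

With a budget of three, the low-impact avatar scenario drops out and the testing effort goes to payments, documents, and messaging first.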

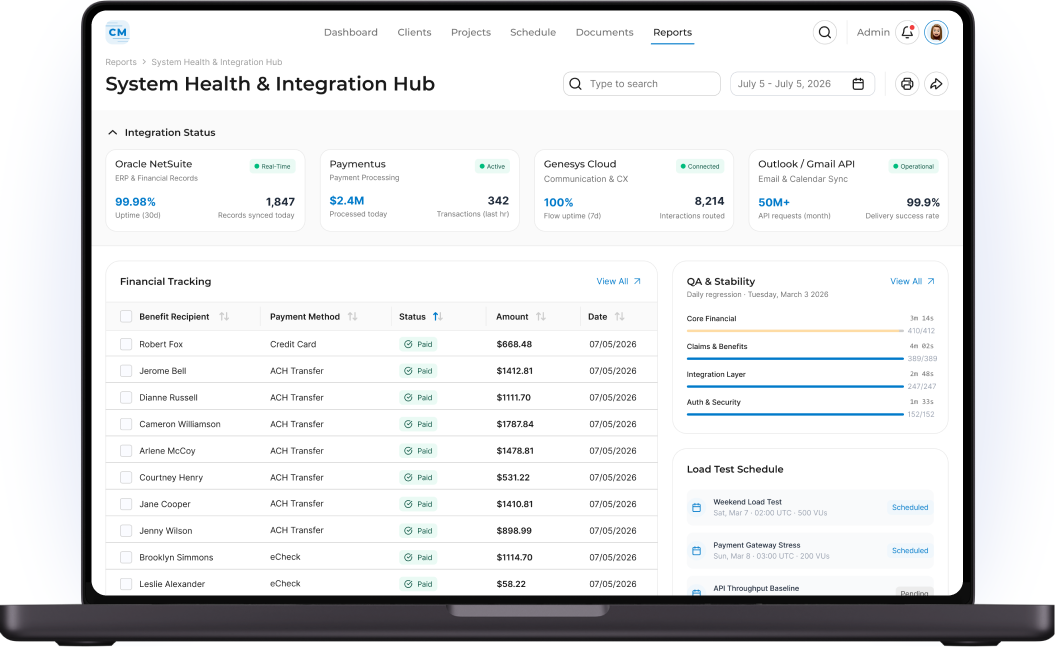

Testing activities included deep validation of communication flows, task lifecycle management, payment tracking logic, and document handling processes. Integration testing was particularly important during this stage, as refactoring could easily disrupt data exchange with external systems. QA verified synchronization between the platform and Genesys Cloud, Outlook Mail, Oracle NetSuite, and Paymentus to ensure data consistency and operational reliability.
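A synchronization check of this kind can be thought of as a reconciliation between two sets of records. The following is a minimal sketch under stated assumptions: the record IDs, field names, and the `reconcile` helper are hypothetical, and in-memory dictionaries stand in for data that would really come from the platform and an external system.

```python
def reconcile(
    platform: dict[str, dict],
    external: dict[str, dict],
    fields: tuple[str, ...],
) -> dict[str, list]:
    """Compare records keyed by ID and report every discrepancy."""
    shared = platform.keys() & external.keys()
    return {
        # Records the platform has but the external system never received.
        "missing_in_external": sorted(platform.keys() - external.keys()),
        # Records the external system has but the platform does not know about.
        "missing_in_platform": sorted(external.keys() - platform.keys()),
        # Records present on both sides whose compared fields disagree.
        "mismatched": sorted(
            (record_id, field)
            for record_id in shared
            for field in fields
            if platform[record_id].get(field) != external[record_id].get(field)
        ),
    }


# Hypothetical payment records, invented for the example.
platform_payments = {
    "P-1": {"amount": 120.00, "status": "paid"},
    "P-2": {"amount": 75.50, "status": "pending"},
    "P-3": {"amount": 40.00, "status": "paid"},
}
external_payments = {
    "P-1": {"amount": 120.00, "status": "paid"},
    "P-2": {"amount": 75.50, "status": "paid"},
    "P-4": {"amount": 10.00, "status": "paid"},
}

report = reconcile(platform_payments, external_payments, fields=("amount", "status"))
print(report)
```

Separating missing records from mismatched fields makes it easier to tell a failed sync job apart from a stale status update.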

Given incomplete documentation, QA specialists actively collaborated with stakeholders to clarify requirements and reconstruct intended system behavior. This helped reduce ambiguity and align testing efforts with actual business needs.

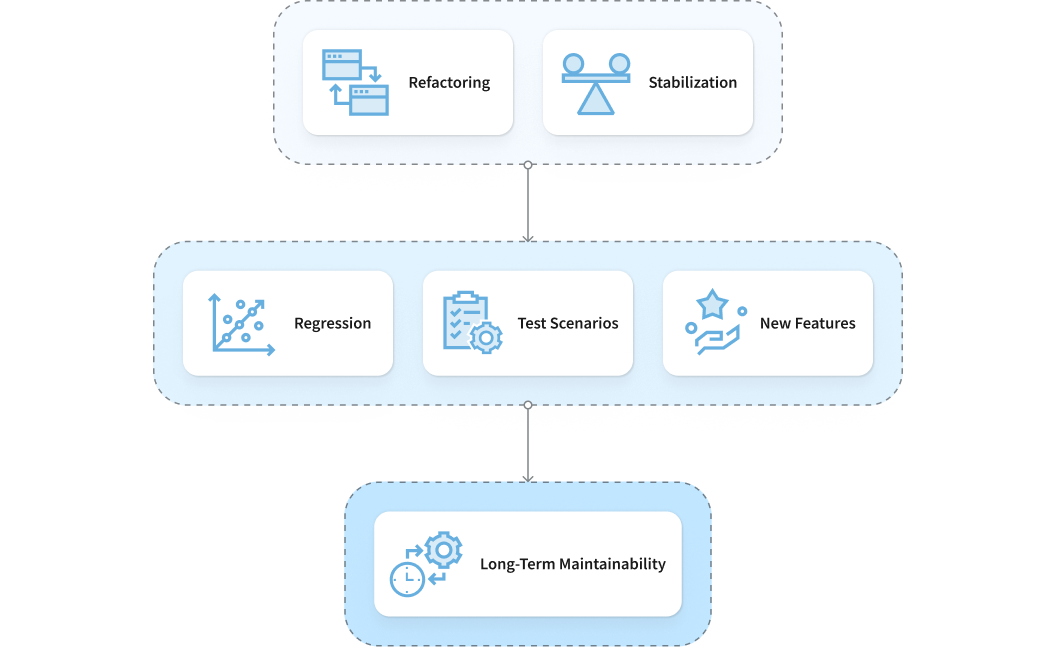

Transition to a stable platform version

Once refactoring and stabilization efforts were completed, the platform reached a stable baseline. This milestone allowed the team to move from reactive defect handling to structured and preventive quality assurance.

QA strategy evolved accordingly. The focus shifted toward strengthening regression coverage, formalizing test scenarios, and preparing the system for safe introduction of new features. Our QA specialists implemented a more systematic approach to test design and documentation to support long-term maintainability.

Continuous delivery and sprint-based releases

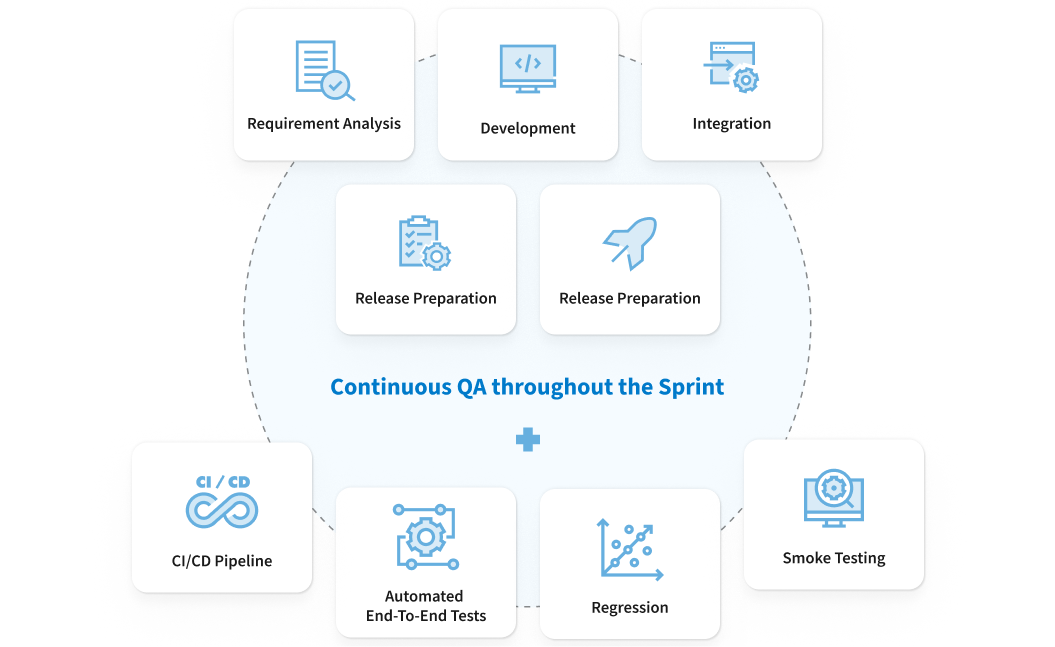

As the project matured, the delivery model transitioned to sprint-based releases with production deployments at the end of each sprint. This required QA to continuously operate throughout the sprint lifecycle rather than as a final validation step.

Testing activities began during requirement analysis and continued through development, integration, and release preparation. QA validated new functionality, assessed regression risks, executed integration checks, and ensured that release readiness criteria were met before deployment.

Regression and smoke testing became mandatory gates before every production release. To protect key business flows, we also integrated automated end-to-end tests into the CI/CD pipeline. This reduced manual overhead while maintaining confidence in frequent deployments.
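The idea of mandatory gates can be sketched as a small readiness check. This is an assumption-laden illustration rather than the project's pipeline logic: the suite names, the `SuiteResult` type, and the `release_blockers` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuiteResult:
    name: str
    passed: int
    failed: int


def release_blockers(
    results: list[SuiteResult],
    required: tuple[str, ...] = ("smoke", "regression", "e2e"),
) -> list[str]:
    """List the reasons a release must not go out; an empty list means ready."""
    by_name = {result.name: result for result in results}
    blockers = []
    for name in required:
        result = by_name.get(name)
        if result is None:
            # A gate that never ran is treated as a failure, not as a pass.
            blockers.append(f"{name}: not run")
        elif result.failed > 0:
            blockers.append(f"{name}: {result.failed} failed")
    return blockers


# Hypothetical results for one release candidate.
candidate = [
    SuiteResult("smoke", passed=25, failed=0),
    SuiteResult("regression", passed=310, failed=2),
]

print(release_blockers(candidate))
```

Treating a missing suite as a blocker keeps a skipped pipeline stage from being mistaken for a green one.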

Automated end-to-end testing

The QA team introduced a dual-pipeline automation approach designed to maximize execution efficiency while maintaining deep functional coverage. Automated tests were fully integrated into the CI/CD pipeline and configured to run automatically after each deployment to the QA environment. This ensured that new builds were validated immediately and that regressions were detected early, before reaching production.

On a daily basis, the framework executes approximately 1,200 standard automated tests covering core business scenarios, integrations, and critical user journeys. In addition, around 140 specialized tests focused on advanced filtering logic and database load behavior are executed during weekends. This scheduling strategy allows the team to validate performance-sensitive and resource-intensive scenarios without impacting weekday delivery speed. The implementation of this structured automation strategy provided higher release confidence, reduced regression risks, and strengthened stakeholder trust in the system’s stability.
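The scheduling split described above can be expressed as a small selection rule. The sketch assumes the standard suite runs every day and the specialized suite is added on Saturdays and Sundays; the suite labels and the `suites_for` helper are hypothetical names chosen for the example.

```python
from datetime import date

STANDARD = "standard"        # roughly 1,200 tests, per the description above
SPECIALIZED = "specialized"  # roughly 140 filtering and database-load tests


def suites_for(day: date) -> list[str]:
    """Pick the suites to run on a given calendar day."""
    suites = [STANDARD]
    # date.weekday() returns 0 for Monday through 6 for Sunday.
    if day.weekday() >= 5:
        suites.append(SPECIALIZED)
    return suites


print(suites_for(date(2024, 6, 3)))  # a Monday
print(suites_for(date(2024, 6, 8)))  # a Saturday
```

Keeping the rule in one function makes the trade-off explicit: resource-intensive checks still run every week, but never compete with weekday builds for pipeline time.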

Technology Solutions

- Used Playwright to enable reliable cross-browser end-to-end and UI test coverage.

- Used Azure DevOps as the central platform for managing requirements, tracking defects, and maintaining traceability across the project.

- Adopted Postman for API testing and back-end validation.

- Performed database-level validation with SQL and query-based checks against MySQL and Azure Cosmos DB to ensure that data was correctly created, updated, and synchronized across the system.

- Validated Azure Storage to ensure that all related files were properly generated, stored, updated, and accessible as part of end-to-end workflows.

- Used Azure Application Insights to analyze system errors, failed requests, and integration issues.
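To give a feel for the query-based database checks mentioned in the list above, here is a minimal sketch of an orphaned-record check. It is not the project's schema: the `tasks` and `documents` tables are hypothetical, and an in-memory SQLite database stands in for MySQL so that the example runs without a server.

```python
import sqlite3


def find_orphaned_documents(conn: sqlite3.Connection) -> list[int]:
    """Return IDs of documents that reference a task which does not exist."""
    rows = conn.execute(
        """
        SELECT d.id
        FROM documents AS d
        LEFT JOIN tasks AS t ON t.id = d.task_id
        WHERE t.id IS NULL
        ORDER BY d.id
        """
    ).fetchall()
    return [row[0] for row in rows]


# Hypothetical schema and data, created only for the demonstration.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE tasks (id INTEGER PRIMARY KEY, title TEXT);
    CREATE TABLE documents (id INTEGER PRIMARY KEY, task_id INTEGER, filename TEXT);
    INSERT INTO tasks VALUES (1, 'Submit medical records');
    INSERT INTO documents VALUES (10, 1, 'records.pdf'), (11, 2, 'stray.pdf');
    """
)

print(find_orphaned_documents(conn))
```

The same LEFT JOIN pattern applies to any parent-child pair where a broken reference would indicate that data was not created or synchronized correctly.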

Value Delivered

- Improved alignment with business needs through close stakeholder collaboration and acceptance validation.

- Reduced operational and integration risks due to risk-based testing and end-to-end workflow validation.

- Reduced regression testing time from 3 days to 0.5–1 day.